BioAssay Express (BAE), the New Tool to Make Unstructured Protocol Data Structured: Use Cases for Assay Informatics

1. INTRODUCTION

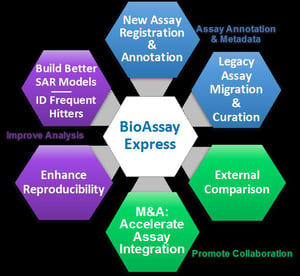

Drug discovery bioassay protocols are documented in a wide variety of styles of scientific English that describe the biological significance of these experiments. As a result, this information traditionally cannot be readily exploited by computational and “Big Data” techniques to extract its inherent value. The BioAssay Express (BAE) platform provides two major tool sets to address this challenge: patented semi-automated mark-up tools and novel Analysis & Visualization tools.

Here are the major use cases for the patented semi-automated mark-up tools and the novel Analysis & Visualization tools, with specific benefits described below:

2.1) Assay Annotation with Metadata,

2.2) Improve Analysis, and

2.3) Promote Collaboration.

2. BAE Use Cases By Area

2.1. Assay Annotation with Metadata: Enable Data Mining

Purpose

- Encode standard, semantic, computer-readable terms (metadata) based on your assay protocol descriptions

- Mark-up, protect, and make useful the wealth of scientific information that your organization is sitting on today

- Prepare for subsequent scientific analysis, collaboration, and business monetization activities

Benefits

- Unlock years-to-decades of data currently unactionable by computer analyses for your organization

- Annotate each assay once – gain value from it forever

Time-To-Value

- 1-2 Quarter project

2.1.1. Historic ('Legacy') Assay Migration & Curation

In marketing interviews conducted by Curlew Research, most companies described the challenge of applying controlled terminologies for bioassays not only to new or modified assays being registered but also to their backlog of existing assays. Their libraries of legacy assays in some cases exceed 20,000 protocols. Here are some quotes from senior informaticians at top 10 pharma companies interviewed by Curlew Research:

- “In the bioassay world if the descriptions are not well captured - the corporate knowledge can disappear”

- “With better assay annotation, you can confidently reuse the data and avoid having to retest”

CDD has unique, patented BioAssay Express technologies and battle-proven services (applied to 3,500 "best of" PubChem MLPCN assays as a strong POC and a unique publicly annotated data resource). Contact info@collaborativedrug.com for BAE quotes.

2.1.2. New Assay Registration

Nine out of ten large pharmaceutical companies interviewed by marketing firm Curlew Research in early 2017 identified an urgent need to upgrade their internal bioassay registration systems to include encoding with controlled terminologies, in order to leverage metadata and derive more value from internal screening campaigns. However, they are struggling to decide how to accomplish this task effectively. Again, here are direct quotes (anonymized, of course) from top informaticians at big pharma highlighting how painful it is to mark up this data without the help of BioAssay Express technologies:

- “Getting scientists to register their assays without threatening to fire them if they didn’t comply”

- “If we are ever going to make sense of the ever more complex assays we are running we will need more metadata”

- “Consistent assay metadata would release a lot of untapped benefit and value”

2.1.3. Convert Annotated Assays to Text (planned for BAE 2.0)

Our roadmap for ‘BAE 2.0’, which includes expanding templates from summary information to detailed steps, also includes developing this “annotation-to-text” feature. The goal would be to generate methods sections in standardized formats (e.g. for scientific publications, coded descriptors for online data depositions, or assay project workflow schema), by translating annotations back to natural language text (English) with a controlled terminology and the appropriate (selectable) format.
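As a rough illustration of the "annotation-to-text" idea, the sketch below renders one set of annotations into two selectable output formats using plain string templates. This is not how BAE implements (or will implement) the feature; the template wording, the format names `publication` and `deposition`, and the annotation keys are all hypothetical.

```python
from typing import Dict

# Hypothetical templates, one per selectable output format.
TEMPLATES: Dict[str, str] = {
    "publication": (
        "A {assay_format} assay was performed against the {target} target, "
        "with {detection_method} detection."
    ),
    "deposition": (
        "format={assay_format}; target={target}; detection={detection_method}"
    ),
}


def render(annotations: Dict[str, str], style: str) -> str:
    """Translate controlled-vocabulary annotations back into text."""
    return TEMPLATES[style].format(**annotations)


# Hypothetical annotations for a single assay.
ANNOTATIONS = {
    "assay_format": "cell-based",
    "target": "kinase",
    "detection_method": "luminescence",
}

publication_text = render(ANNOTATIONS, "publication")
deposition_text = render(ANNOTATIONS, "deposition")
print(publication_text)
print(deposition_text)
```

Because the text is generated from controlled terms, every assay rendered in a given format would use the same vocabulary and sentence structure.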

2.2. IMPROVE ANALYSIS

Benefits

- Enhanced opportunities to ask novel questions, perform queries previously not possible

- Improved in silico models save time and resources in SAR campaigns

- Flagging hits that are potentially experimental artifacts (aka ‘frequent hitters’) saves time and resources

- Easy searching for assays, and for similar assays, avoids unnecessary assay duplication and promotes reproducibility

2.2.1. Make All Assays Easily Discoverable

In short, having assay protocols annotated with semantic terms allows all members of an organization to access, use, and learn from the vast institutional knowledge already accumulated.

2.2.2. Build Better SAR Models

Once an organization has used the BAE curation tool to produce a set of well-annotated assays, the BAE analysis and visualization tools enable novel ways to analyze structure-activity relationships (SAR). Searching for assays involves specifying a set of annotations and starting the search, which retrieves a list of assays ranked in decreasing order of similarity.
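The ranked search described above can be sketched as follows. The similarity measure BAE actually uses is not stated here, so this example assumes a simple Jaccard similarity over sets of annotation terms; the assay IDs, property names, and values are hypothetical.

```python
from typing import Dict, List, Set, Tuple

# An annotation is a (property, value) pair, e.g. ("target", "kinase").
Annotation = Tuple[str, str]


def jaccard(a: Set[Annotation], b: Set[Annotation]) -> float:
    """Shared annotations divided by all distinct annotations."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def rank_assays(
    query: Set[Annotation], assays: Dict[str, Set[Annotation]]
) -> List[Tuple[str, float]]:
    """Return (assay_id, similarity) pairs, most similar first."""
    scored = [(assay_id, jaccard(query, terms)) for assay_id, terms in assays.items()]
    # Sort by decreasing similarity; break ties by assay ID for stable output.
    return sorted(scored, key=lambda pair: (-pair[1], pair[0]))


# Hypothetical annotated assays.
ASSAYS: Dict[str, Set[Annotation]] = {
    "assay-A": {("assay format", "cell-based"), ("detection method", "luminescence"), ("target", "kinase")},
    "assay-B": {("assay format", "biochemical"), ("detection method", "fluorescence"), ("target", "kinase")},
    "assay-C": {("assay format", "cell-based"), ("detection method", "luminescence"), ("target", "GPCR")},
}

QUERY: Set[Annotation] = {
    ("assay format", "cell-based"),
    ("detection method", "luminescence"),
    ("target", "kinase"),
}

ranking = rank_assays(QUERY, ASSAYS)
for assay_id, score in ranking:
    print(f"{assay_id}: {score:.2f}")
```

With these sample annotations, `assay-A` matches the query exactly, `assay-C` shares two of four distinct terms, and `assay-B` shares one of five, so the ranking is A, C, B.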

One can drill down to the details and visualize the related assays as a grid (see example at right), with assays on the x-axis and assay properties (annotation terms) on the y-axis. Again, several quotes from senior scientists at big pharma bring home why this is critical:

- “The better decisions are the most informed ones; the more you know about the assay the more it informs your decision”

- “Having more data about an assay enables you to ask different questions”

2.2.3. Frequent Hitter Analysis

A recent ACS editorial (Aldrich et al. The Ecstasy and Agony of Assay Interference Compounds. ACS Central Science 2017. 3:143) highlights the high rate (80-100%) of initial screening hits that stem from experimental artifacts. It clearly is in an organization's interest to identify such artifacts (or 'frequent hitters') as early as possible in the drug discovery process, to avoid expending valuable resources on these dead ends. A study published by AstraZeneca (Zander et al. Using the BioAssay Ontology for analyzing high-throughput screening data. J Biomol Screen 2015. 20:402) demonstrated the benefit of detecting such artifacts by analysis of assays annotated with BioAssay Ontology (BAO) terms.

The BAE analysis tool can be used to directly and quickly identify frequent hitters via several approaches. For each month delay in identifying a frequent hitter, not only are significant resources wasted on such artifacts but resources are often not being focused on compounds with the greatest potential. A conservative estimate of potential savings impact is 1 FTE-month saved for each month of earlier detection per compound.
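One possible approach, shown here only as a minimal sketch, is to flag compounds that are active in a large fraction of the assays they were tested in, across several unrelated targets. The thresholds, compound and assay IDs, and the use of a `target` annotation to define "unrelated" are assumptions for illustration, not a description of the BAE analysis tool.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple

# Hypothetical screening results: (compound_id, assay_id, is_active).
RESULTS: List[Tuple[str, str, bool]] = [
    ("cmpd-1", "assay-A", True), ("cmpd-1", "assay-B", True),
    ("cmpd-1", "assay-C", True), ("cmpd-1", "assay-D", True),
    ("cmpd-2", "assay-A", True), ("cmpd-2", "assay-B", False),
    ("cmpd-2", "assay-C", False), ("cmpd-2", "assay-D", False),
    ("cmpd-3", "assay-A", False), ("cmpd-3", "assay-B", True),
    ("cmpd-3", "assay-C", True), ("cmpd-3", "assay-D", False),
]

# Hypothetical target annotation for each assay.
ASSAY_TARGET: Dict[str, str] = {
    "assay-A": "kinase",
    "assay-B": "GPCR",
    "assay-C": "protease",
    "assay-D": "kinase",
}


def frequent_hitters(
    results: List[Tuple[str, str, bool]],
    assay_target: Dict[str, str],
    min_hit_rate: float = 0.75,  # assumed threshold
    min_targets: int = 3,        # assumed threshold
) -> Dict[str, float]:
    """Map each flagged compound to its hit rate."""
    tested: Dict[str, int] = defaultdict(int)
    active: Dict[str, int] = defaultdict(int)
    targets_hit: Dict[str, Set[str]] = defaultdict(set)
    for compound, assay, is_active in results:
        tested[compound] += 1
        if is_active:
            active[compound] += 1
            targets_hit[compound].add(assay_target[assay])

    flagged: Dict[str, float] = {}
    for compound, n_tested in tested.items():
        rate = active[compound] / n_tested
        # Flag only compounds that hit often AND across distinct targets.
        if rate >= min_hit_rate and len(targets_hit[compound]) >= min_targets:
            flagged[compound] = rate
    return flagged


flagged = frequent_hitters(RESULTS, ASSAY_TARGET)
print(flagged)
```

Requiring activity across distinct annotated targets is what separates a promiscuous compound from one that is merely potent against a single, heavily screened target. That distinction depends on the assays being annotated in the first place.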

2.2.4. Reduce Duplication and Enhance Reproducibility

We have often heard the comment ‘Unless I did it myself, it’s often easier to repeat an assay than to try to find it in our database’. Bayer reported that only 25% of published preclinical studies could be validated and that inconsistencies between published findings and the company’s own results caused delays or cancellations in ~2/3 of projects (Prinz et al. Believe it or not: how much can we rely on published data on potential drug targets? Nature Rev. Drug Disc. 2011. 10:712). Amgen reported that scientific findings could be confirmed in only 11% of 53 landmark studies (Begley & Ellis, Drug development: Raise standards for preclinical cancer research. Nature 2012. 483:531). A recently reported replication effort (eLife 2017;6:e23693, funded by a $2M grant from the Laura and John Arnold Foundation) of five cancer biology studies found that two replicated, one failed, and two were uninterpretable. Irreproducibility of preclinical studies has gained widespread attention.

Benefits of improving the reproducibility include:

- Flagging differences between closely related assays

- Correlating differences in protocols with differences in results

- Improving the reproducibility of experiments conducted in different laboratories

- Identifying root causes of divergences
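The first of these benefits, flagging differences between closely related assays, amounts to a property-by-property comparison once both protocols are annotated. The sketch below assumes each assay is represented as a mapping from annotation property to value; the property names and values are hypothetical.

```python
from typing import Dict, Optional, Tuple


def annotation_diff(
    a: Dict[str, str], b: Dict[str, str]
) -> Dict[str, Tuple[Optional[str], Optional[str]]]:
    """Return the properties whose values differ between two assays.

    A value of None means the property is not annotated on that assay.
    """
    diffs: Dict[str, Tuple[Optional[str], Optional[str]]] = {}
    for prop in sorted(a.keys() | b.keys()):
        value_a, value_b = a.get(prop), b.get(prop)
        if value_a != value_b:
            diffs[prop] = (value_a, value_b)
    return diffs


# Hypothetical annotations for the "same" assay run at two sites.
SITE_1 = {
    "assay format": "cell-based",
    "cell line": "HEK293",
    "detection method": "luminescence",
    "incubation time": "24 h",
}
SITE_2 = {
    "assay format": "cell-based",
    "cell line": "HeLa",
    "detection method": "luminescence",
    "incubation time": "48 h",
}

differences = annotation_diff(SITE_1, SITE_2)
for prop, (left, right) in differences.items():
    print(f"{prop}: {left} vs {right}")
```

Here the two protocols agree on format and detection method but differ in cell line and incubation time, which gives a short list of candidate root causes if the results diverge.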

2.3. PROMOTE COLLABORATION

Benefits

- Simply combine datasets from two different institutions (e.g., in-house with a CRO or another company from an M&A)

- Publicly annotated data can be imported and mixed with private content
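To illustrate why shared annotations make combining datasets simple, the sketch below pools records from three hypothetical sources and runs one query across all of them. The record layout, source names, and assay IDs are assumptions and do not reflect BAE's actual data model or import format.

```python
from typing import Dict, List

# Each record carries its source and its annotation terms (hypothetical layout).
Record = Dict[str, object]

IN_HOUSE: List[Record] = [
    {"assay_id": "INT-001", "source": "in-house",
     "terms": {"target": "kinase", "detection method": "luminescence"}},
    {"assay_id": "INT-002", "source": "in-house",
     "terms": {"target": "GPCR", "detection method": "fluorescence"}},
]
CRO: List[Record] = [
    {"assay_id": "CRO-101", "source": "CRO",
     "terms": {"target": "kinase", "detection method": "fluorescence"}},
]
PUBLIC: List[Record] = [
    {"assay_id": "PUB-900", "source": "public",
     "terms": {"target": "kinase", "detection method": "luminescence"}},
]


def combine(*datasets: List[Record]) -> List[Record]:
    """Pool records from any number of sources into one list."""
    return [record for dataset in datasets for record in dataset]


def find(records: List[Record], criteria: Dict[str, str]) -> List[str]:
    """Return IDs of assays whose terms match every criterion."""
    return [
        str(record["assay_id"])
        for record in records
        if all(record["terms"].get(prop) == value for prop, value in criteria.items())
    ]


pooled = combine(IN_HOUSE, CRO, PUBLIC)
kinase_assays = find(pooled, {"target": "kinase"})
kinase_lum_assays = find(
    pooled, {"target": "kinase", "detection method": "luminescence"}
)
print(kinase_assays)
print(kinase_lum_assays)
```

The query works across sources only because all three use the same terms for the same concepts. Without a shared vocabulary, each source would first need its own mapping step.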

2.3.1. External Comparison

The trend toward outsourcing ever greater amounts of the early-stage drug discovery pipeline presents new challenges in integrating and comparing these external data with internally generated data. As a Senior Cheminformatician from a top 10 pharma company told the market research firm Curlew Research, "One of our biggest challenges now is the gap between analyzing internal and external together".

BAE enables researchers to intelligently and efficiently search across all annotated assays.

2.3.2. Assay Informatics for Collaborative, Public/Private Research

Public-private partnerships around early-stage drug discovery are on the rise, particularly for neglected diseases (e.g., TB Alliance, Medicines for Malaria Venture (MMV), CARB-X). These collaborations require best practices for sharing data and resources across sites, countries, and disciplines to enhance discovery and reduce duplication.

CDD's core product, CDD Vault, is already used by many of these global consortia to manage their screening data, so CDD is well-positioned to encourage these groups to adopt assay annotation using BAE, and is actively doing so. With public repositories such as PubChem and ChEMBL also keenly interested in working with CDD to expand semantic annotation of deposited assays, there is clear momentum building. Early BAE adopters will not only be able to incorporate these public or collaborative data into their analyses, but could also play a leading role in this new field of 'assay informatics'.

2.3.3. Mergers and Acquisitions (M&A)

One well-known challenge for any pharma M&A is integrating the smaller company's assay data into the bigger (or equal-sized) organization's data management system. Despite intensive due diligence to establish the value and reliability of the assay data (and hence justify the multi-million-dollar investment), these efforts evaluate those data in isolation and do not factor in the feasibility or logistics of integration. Scientists from both parties are typically needed to find, read, and assess the assay protocols, and to work painstakingly with informatics specialists to understand the data in context.

In contrast, if the pharma company uses BAE for its internal assays, it already has a clear process established for capturing critical assay metadata. Upon a merger or acquisition, it could immediately use BAE to assign annotations to the acquired assays and import the compounds, readouts, and annotations. For legacy assays, CDD can help accelerate this process by providing one-time curation services.

Using BAE to help integrate acquired data assets can not only save an organization time (the task could be completed in days-to-weeks instead of months-to-years) but also enhance the value of those now-discoverable drug discovery assets.

This blog is authored by members of the CDD Vault community. CDD Vault is a hosted drug discovery informatics platform that securely manages both private and external biological and chemical data. It provides core functionality including chemical registration, structure activity relationship, chemical inventory, and electronic lab notebook capabilities.
